Engineers create 3D-printed objects that sense how a user is interacting with them

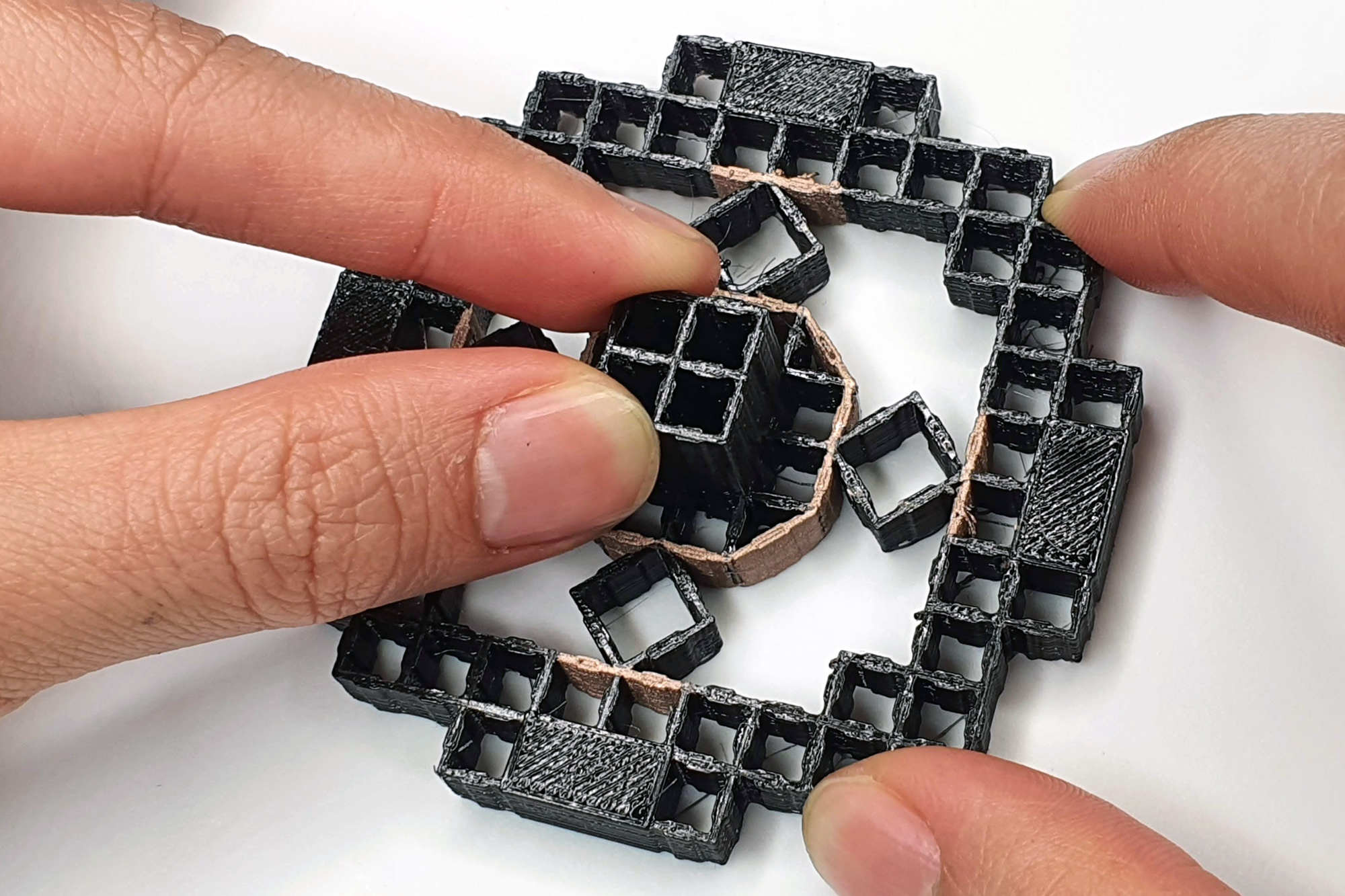

MIT researchers have developed a new method to 3D print mechanisms that detect how force is being applied to an object. The structures are made from a single piece of material, so they can be rapidly prototyped. A designer could use this method to 3D print “interactive input devices,” like a joystick, switch, or handheld controller, in one go.

To accomplish this, the researchers integrated electrodes into structures made from metamaterials, which are materials divided into a grid of repeating cells. They also created editing software that helps users build these interactive devices.

“Metamaterials can support different mechanical functionalities. But if we create a metamaterial door handle, can we also know that the door handle is being rotated, and if so, by how many degrees? If you have special sensing requirements, our work enables you to customize a mechanism to meet your needs,” says co-lead author Jun Gong, a former visiting PhD student at MIT who is now a research scientist at Apple.

Gong wrote the paper alongside fellow lead authors Olivia Seow, a graduate student in the MIT Department of Electrical Engineering and Computer Science (EECS), and Cedric Honnet, a research assistant in the MIT Media Lab. Other co-authors are MIT graduate student Jack Forman and senior author Stefanie Mueller, who is an associate professor in EECS and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL). The research will be presented at the Association for Computing Machinery Symposium on User Interface Software and Technology next month.

“What I find most exciting about the project is the capability to integrate sensing directly into the material structure of objects. This will enable new intelligent environments in which our objects can sense each interaction with them,” Mueller says. “For instance, a chair or couch made from our smart material could detect the user’s body when the user sits on it and either use it to query particular functions (such as turning on the light or TV) or to collect data for later analysis (such as detecting and correcting body posture).”

news.mit.edu