Meta AI Introduces ReSkin (A Touch-Sensing “Skin” For AI Tactile Perception Research) Along With A Python Sensor Library To Interface With ReSkin Sensors

Our sense of touch helps us gather information about our surroundings and accomplish everyday tasks. Despite advances in AI research that incorporates vision and sound, touch remains a challenge, in part because tactile-sensing data is hard to come by in the wild.

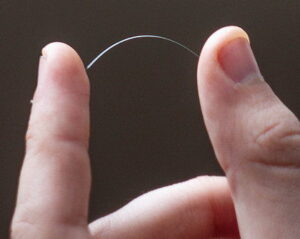

To help researchers advance their AI’s tactile-sensing skills rapidly and at scale, researchers at Meta AI (formerly Facebook AI), in collaboration with Carnegie Mellon University, have introduced ReSkin, a new open-source touch-sensing “skin.” ReSkin is a low-cost, adaptable, durable, and replaceable long-term solution that builds on advances in machine learning and magnetic sensing. It uses a self-supervised learning technique to auto-calibrate the sensor, which helps it generalize and allows data to be shared across sensors and systems.

ReSkin is inexpensive: at a volume of 100 units, each costs less than $6, and larger volumes cost considerably less. It is 2-3 mm thick, lasts for more than 50,000 interactions, and offers a temporal resolution of up to 400 Hz and a spatial resolution of 1 mm with 90% accuracy. These specs make it suitable for a wide range of form factors, including robot hands, arm sleeves, tactile gloves, and more. For fast manipulation tasks like slipping, tossing, catching, and clapping, ReSkin can also provide high-frequency three-axis tactile signals. Furthermore, it can be easily peeled off and replaced when it wears out.
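
To make the 400 Hz figure concrete, here is a minimal sketch of how a stream of three-axis readings at that rate could be scanned for a sudden contact event. It does not use the ReSkin sensor library or its API; the data is synthetic, and the function names, signal units, and threshold are all assumptions chosen for illustration.

```python
import math

SAMPLE_RATE_HZ = 400  # upper temporal resolution quoted in the article


def magnitude(sample):
    """Euclidean norm of one (x, y, z) reading (arbitrary units)."""
    return math.sqrt(sum(c * c for c in sample))


def detect_events(samples, threshold):
    """Return timestamps (seconds) where the signal magnitude changes
    by more than `threshold` between two consecutive samples."""
    events = []
    for i in range(1, len(samples)):
        jump = abs(magnitude(samples[i]) - magnitude(samples[i - 1]))
        if jump > threshold:
            events.append(i / SAMPLE_RATE_HZ)
    return events


def synthetic_stream(n_samples, contact_index):
    """Hypothetical data: a flat baseline, then a step change at contact."""
    baseline = (0.0, 0.0, 1.0)
    pressed = (0.3, 0.0, 2.0)
    return [baseline if i < contact_index else pressed
            for i in range(n_samples)]


# One second of made-up data with contact starting at sample 100.
stream = synthetic_stream(n_samples=400, contact_index=100)
events = detect_events(stream, threshold=0.5)
print(events)
```

At 400 Hz, consecutive samples are 2.5 ms apart, so an event like the one above is localized in time to within a few milliseconds. A real pipeline would need to handle sensor noise and drift, which this sketch ignores.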

www.marktechpost.com